This blog post is a version of a talk I gave at the 2018 ACM Designing Interactive Systems (DIS) Conference based on a paper written with Nick Merrill and John Chuang, entitled When BCIs have APIs: Design Fictions of Everyday Brain-Computer Interface Adoption. Find out more on our project page, or download the paper: [PDF link] [ACM link]

In recent years, brain computer interfaces, or BCIs, have shifted from far-off science fiction, to medical research, to the realm of consumer-grade devices that can sense brainwaves and EEG signals. Brain computer interfaces have also featured more prominently in corporate and public imaginations, such as Elon Musk’s project that has been said to create a global shared brain, or fears that BCIs will result in thought control.

Most of these narratives and imaginings about BCIs tend to be utopian, or dystopian, imagining radical technological or social change. However, we instead aim to imagine futures that are not radically different from our own. In our project, we use design fiction to ask: how can we graft brain computer interfaces onto the everyday and mundane worlds we already live in? How can we explore how BCI uses, benefits, and labor practices may not be evenly distributed when they get adopted?

Brain computer interfaces allow the control of a computer from neural output. In recent years, several consumer-grade brain-computer interface devices have come to market. One example is the Neurable – it’s a headset used as an input device for virtual reality systems. It detects when a user recognizes an object that they want to select. It uses a phenomenon called the P300 – when a person either recognizes a stimulus, or receives a stimulus they are not expecting, electrical activity in their brain spikes approximately 300 milliseconds after the stimulus. This electrical spike can be detected by an EEG, and by several consumer BCI devices such as the Neurable. Applications utilizing the P300 phenomenon include hands-free ways to type or click.
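To make the timing of the P300 a little more concrete, here is a minimal sketch using a simulated signal rather than real EEG. It assumes one common way of looking for stimulus-locked responses: average many short windows that each start at a stimulus, so random noise cancels out while the response near 300 milliseconds remains. The sampling rate, noise level, bump shape, and all function names are illustrative assumptions, not properties of the Neurable or any other device.

```python
import math
import random

SAMPLE_RATE_HZ = 250   # assumed sampling rate for the toy signal
EPOCH_MS = 600         # length of the window kept after each stimulus


def simulate_epoch(rng, has_p300):
    """Return one post-stimulus window of fake EEG (arbitrary units)."""
    n_samples = SAMPLE_RATE_HZ * EPOCH_MS // 1000
    epoch = []
    for i in range(n_samples):
        t_ms = 1000 * i / SAMPLE_RATE_HZ
        noise = rng.gauss(0, 5.0)
        # A Gaussian bump centred on 300 ms stands in for the P300.
        bump = 4.0 * math.exp(-((t_ms - 300) ** 2) / (2 * 40 ** 2)) if has_p300 else 0.0
        epoch.append(noise + bump)
    return epoch


def average_epochs(epochs):
    """Average many stimulus-locked windows, sample by sample."""
    return [sum(column) / len(column) for column in zip(*epochs)]


def peak_latency_ms(averaged):
    """Time (ms after the stimulus) of the largest averaged value."""
    idx = max(range(len(averaged)), key=averaged.__getitem__)
    return 1000 * idx / SAMPLE_RATE_HZ


rng = random.Random(0)
target_avg = average_epochs([simulate_epoch(rng, True) for _ in range(400)])
nontarget_avg = average_epochs([simulate_epoch(rng, False) for _ in range(400)])

print("peak latency for recognized stimuli (ms):", peak_latency_ms(target_avg))
print("largest averaged value without a P300:", max(nontarget_avg))
```

In a single window the bump is smaller than the noise, which is one reason real systems typically need repeated stimuli or more sophisticated classifiers than this toy average.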

Demo video of a text entry system using the P300

Neurable demonstration video

We base our analysis on this already-existing capability of brain computer interfaces, rather than the more fantastical narratives (at least for now) of computers being able to clearly read humans’ inner thoughts and emotions. Instead, we create a set of scenarios that makes use of the P300 phenomenon in new applications, combined with the adoption of consumer-grade BCIs by new groups and social systems.

Stories about BCI’s hypothetical future as a device to make life easier for “everyone” abound, particularly in Silicon Valley, as shown in recent research. These tend to be very totalizing accounts, neglecting the nuance of multiple everyday experiences. However, past research shows that the introduction of new digital technologies ends up unevenly shaping practices and arrangements of power and work – from the introduction of computers in workplaces in the 1980s, to the introduction of email, to forms of labor enabled by algorithms and digital platforms. We use a set of design fictions to interrogate these potential arrangements in BCI systems, situated in different types of workers’ everyday experiences.

Design Fictions

Design fiction is a practice of creating conceptual designs or artifacts that help create a fictional reality. We can use design fiction to ask questions about possible configurations of the world and to think through issues that have relevance and implications for present realities. (I’ve written more about design fiction in prior blog posts).

We build on Lindley et al.’s proposal to use design fiction to study the “implications for adoption” of emerging technologies. They argue that design fiction can “create plausible, mundane, and speculative futures, within which today’s emerging technologies may be prototyped as if they are domesticated and situated,” which we can then analyze with a range of lenses, such as those from science and technology studies. For us, this lets us think about technologies beyond ideal use cases. It lets us be attuned to the power relations and inequalities that people experience today, and interrogate how emerging technologies might be taken up, reused, and reinterpreted in a variety of existing social relations and systems of power.

To explore this, we thus created a set of interconnected design fictions that exist within the same fictional universe, showing different sites of adoptions and interactions. We build on Coulton et al.’s insight that design fiction can be a “world-building” exercise; design fictions can simultaneously exist in the same imagined world and provide multiple “entry points” into that world.

We created 4 design fictions that exist in the same world: (1) a README for a fictional BCI API, (2) a question on Stack Overflow from a programmer who is working with the API, (3) an internal business memo from an online dating company, (4) a set of forum posts by crowdworkers who use BCIs to do content moderation tasks. These are downloadable at our project page if you want to see them in more detail. (I’ll also note that we conducted our work in the United States, and that our authorship of these fictions, as well as our interpretations and analysis, are informed by this sociocultural context.)

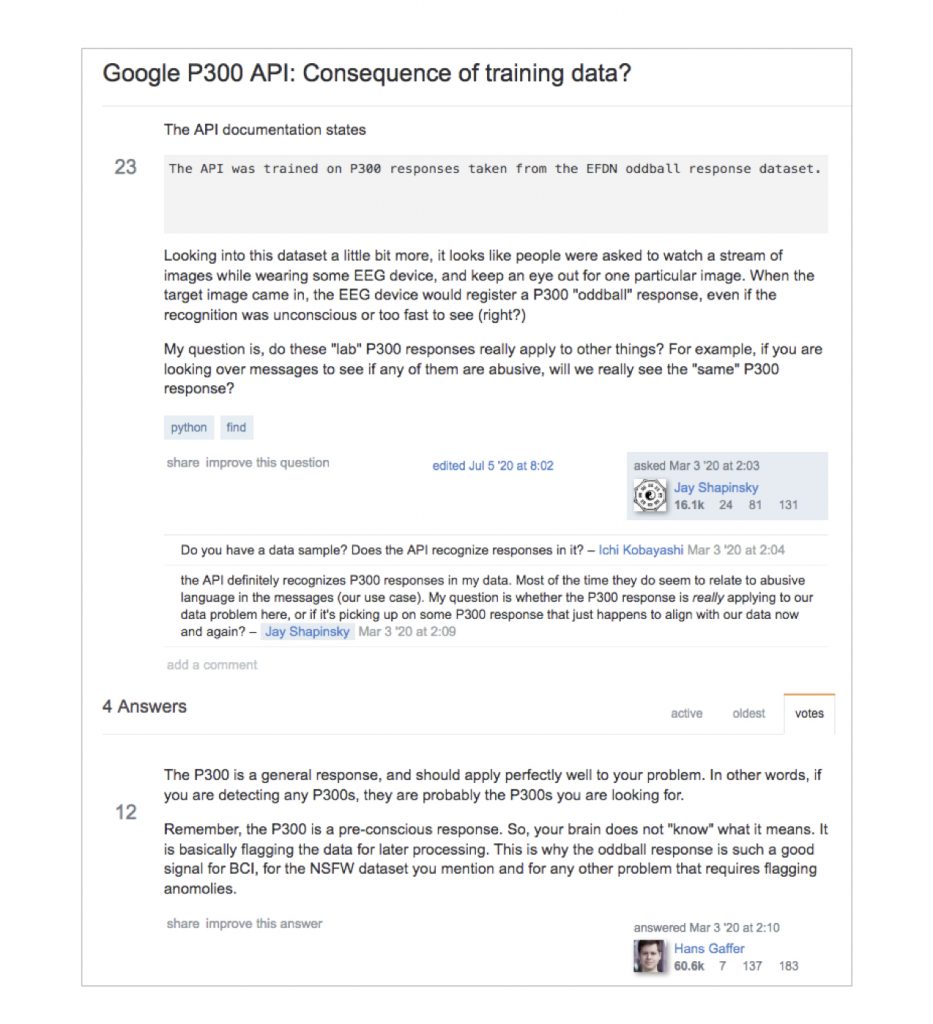

First, this is README documentation of an API for identifying P300 spikes in a stream of EEG signals. The P300 response, or “oddball” response, is a real phenomenon. It’s a spike in brain activity when a person is either surprised, or when they see something that they’re looking for. This fictional API helps identify those spikes in EEG data. We made this fiction in the form of a GitHub page to emphasize the everyday nature of this documentation, from the viewpoint of a software developer. In the fiction, the algorithms underlying this API come from a specific set of training data from a controlled environment in a university research lab. The API discloses and openly links to the data that its algorithms were trained on.
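As a rough illustration of what “identifying P300 spikes in a stream” could mean in code, here is a toy sketch. It is not taken from our fictional README: the function name, the 250 to 400 millisecond window, the baseline comparison, and the threshold rule are all hypothetical choices made for this example.

```python
from statistics import mean


def find_p300_candidates(samples, stimulus_onsets, sample_rate_hz, threshold):
    """Flag stimuli whose 250-400 ms window rises above the pre-stimulus baseline.

    Hypothetical helper: the window bounds and the threshold rule are
    illustrative assumptions, not the fictional API's actual design.
    """
    baseline_len = 100 * sample_rate_hz // 1000   # 100 ms before the stimulus
    window_start = 250 * sample_rate_hz // 1000   # 250 ms after the stimulus
    window_end = 400 * sample_rate_hz // 1000     # 400 ms after the stimulus

    flagged = []
    for onset in stimulus_onsets:
        if onset - baseline_len < 0 or onset + window_end > len(samples):
            continue  # not enough data around this stimulus
        baseline = mean(samples[onset - baseline_len:onset])
        response = mean(samples[onset + window_start:onset + window_end])
        if response - baseline > threshold:
            flagged.append(onset)
    return flagged


# Toy stream sampled at 100 Hz: flat, with one raised stretch
# 250-400 ms after the stimulus shown at sample 100.
stream = [0.0] * 300
for i in range(125, 140):
    stream[i] = 5.0

flagged_onsets = find_p300_candidates(stream, [20, 100, 200], 100, threshold=2.0)
print(flagged_onsets)
```

The `threshold` argument is where the lab origins of such a system would quietly live: a value tuned on data from a controlled environment carries no guarantee of fitting responses recorded in other settings.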

For us, creating and analyzing this fiction surfaces an ambiguity and a tension about how generalizable the system’s model of the brain is. The API with a README implies that the system is meant to be generalizable, despite some indications based on its training dataset that it might be more limited. This fiction also gestures more broadly toward the involvement of academic research in larger technical infrastructures. The documentation notes that the API started as a research project by a professor at a university before becoming hosted and maintained by a large tech company. For us, this highlights how collaborations between research and industry may produce artifacts that move into broader contexts. Yet researchers may not be thinking about the potential effects or implications of their technical systems in these broader contexts.

Second, a developer, Jay, is working with the BCI API to develop a tool for content moderation. He asks a question on Stack Overflow, a real website for developers to ask and answer technical questions. He questions the API’s applicability beyond lab-based stimuli, asking “do these ‘lab’ P300 responses really apply to other things? If you are looking over messages to see if any of them are abusive, will we really see the ‘same’ P300 response?” The answers from other developers suggest that they predominantly believe the API is generalizable to a broader class of tasks, with the most agreed-upon answer saying “The P300 is a general response, and should apply perfectly well to your problem.”

This fiction helps us explore how and where contestation may occur in technical communities, and where discussion of social values or social implications could arise. We imagine the first developer, Jay, as someone who is sensitive to the way the API was trained, and questions its applicability to a new domain. However, he encounters commenters who believe that physiological signals are always generalizable, and who don’t engage with questions of broader applicability. The community’s answers reinforce notions not just of what the technical artifacts can do, but what the human brain can do. The Stack Overflow answers draw on a popular, though critiqued, notion of the “brain-as-computer,” framing the brain as a processing unit with generic processes that take inputs and produce outputs. Here, this notion is reinforced in the social realm on Stack Overflow.

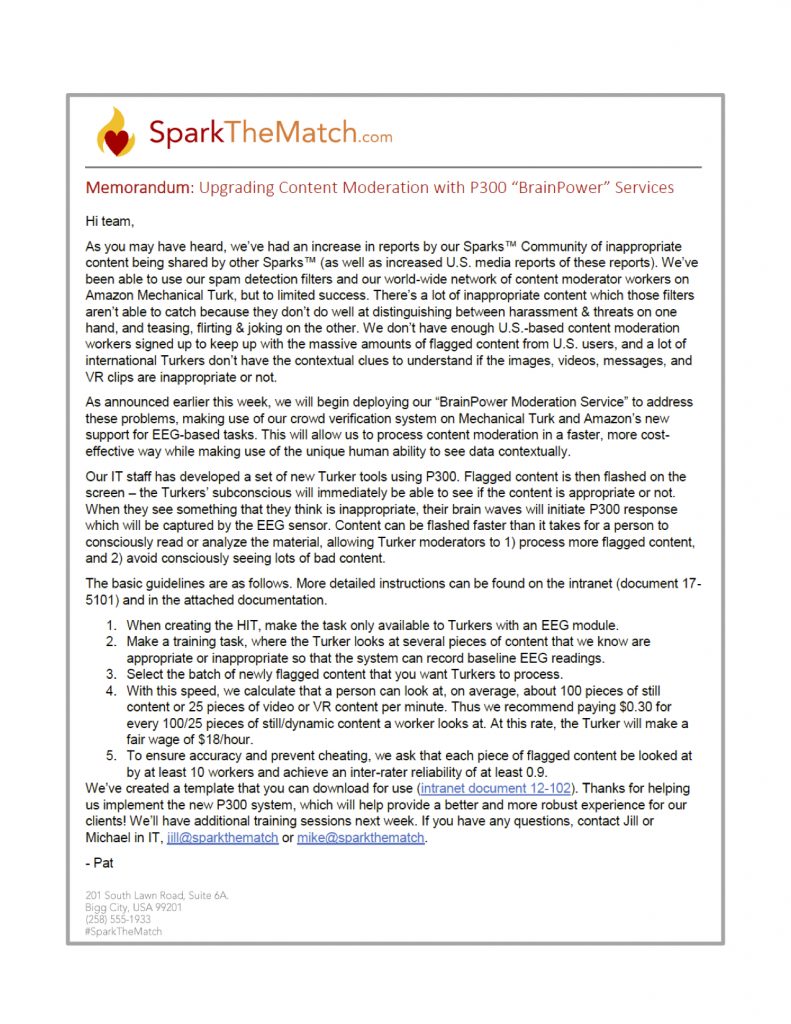

Meanwhile, SparkTheMatch.com, a fictional online dating service, is struggling to moderate and manage inappropriate user content on their platform. SparkTheMatch wants to utilize the P300 signal to tap into people’s tacit “gut feelings” to recognize inappropriate content. They are planning to implement a content moderation process using crowdsourced workers wearing BCIs.

In creating this fiction, we use the memo to provide insight into some of the practices and labor supporting the BCI-assisted review process from the company’s perspective. The memo suggests that the use of BCIs with Mechanical Turk will “help increase efficiency” for crowdworkers while still giving them a fair wage. The crowdworkers sit and watch a stream of flashing content while wearing a BCI, and the P300 response will subconsciously identify when workers recognize supposedly abnormal content. Yet we find it debatable whether or not this process improves the material conditions of the Turk workers. The amount of content to look at in order to make the supposedly fair wage may not actually be reasonable.

SparkTheMatch employees creating the Mechanical Turk tasks don’t directly interact with the BCI API. Instead they use pre-defined templates created by the company’s IT staff, a much more mediated interaction compared to the programmers and developers reading documentation and posting on Stack Overflow. By this point, the research lab origins of the P300 API underlying the service and questions about its broader applicability are hidden. From the viewpoint of SparkTheMatch staff, the BCI aspects of their service just “work,” allowing managers to design their workflows around them, obfuscating the inner workings of the P300 API.

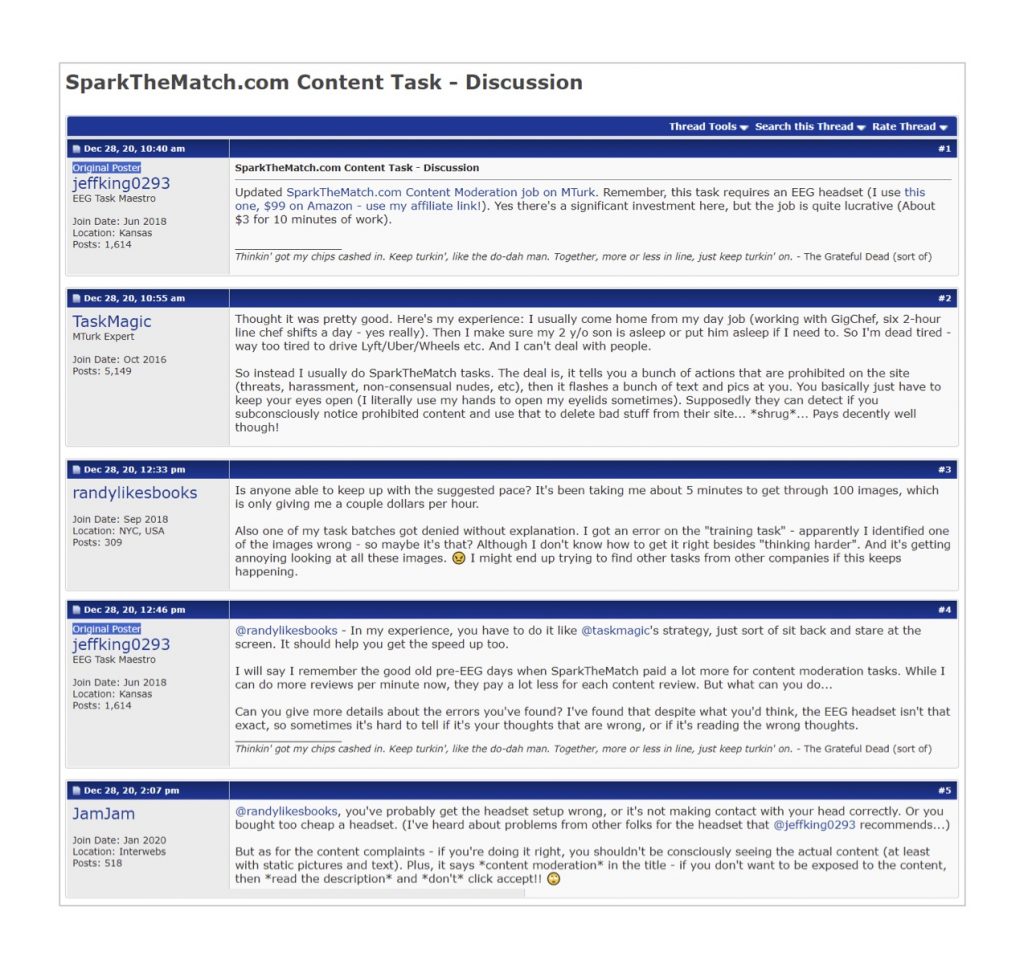

Fourth, the Mechanical Turk workers who do the SparkTheMatch content moderation work share their experiences on a crowdworker forum. These crowd workers’ experiences of and relationships to the P300 API are strikingly different from those of the people and organizations described in the other fictions. Notably, the API is something that they do not get to explicitly see. Aspects of the system are blackboxed or hidden away. While one poster discusses some errors that occurred, there’s ambiguity about whether fault lies with the BCI device or the data processing. EEG signals are not easily human-comprehensible, making feedback mechanisms difficult. Other posters blame the user for the errors, which is problematic given the precariousness of these workers’ positions, as crowd workers tend to have few forms of recourse when encountering problems with tasks.

For us, these forum accounts are interesting because they describe a situation in which the BCI user is not the person who obtains the real benefits of its use. It’s the company SparkTheMatch, not the BCI end users, that is obtaining the most benefit from BCIs.

Some Emergent Themes and Reflections

From these design fictions, several salient themes arose for us. First, by looking at BCIs from the perspective of several everyday experiences, we can see different types of work done in relation to BCIs – whether that’s doing software development, being a client for a BCI-service, or using the BCI to conduct work. Our fictions are inspired by others’ research on the existing labor relationships and power dynamics in crowdwork and distributed content moderation (in particular work by scholars Lilly Irani and Sarah T. Roberts). Here we also critique utopian narratives of brain-controlled computing that suggest BCIs will create new efficiencies, seamless interactions, and increased productivity. We investigate a set of questions on the role of technology in shaping and reproducing social and economic inequalities.

Second, we use the design fiction to surface questions about the situatedness of brain sensing, questioning how generalizable and universal physiological signals are. Building on prior accounts of situated actions and extended cognition, we note the specific and the particular should be taken into account in the design of supposedly generalizable BCI systems.

These themes arose iteratively, and were somewhat surprising for us, particularly just how different the BCI system looks from each of the different perspectives in the fictions. We initially set out to create a rather mundane fictional platform or infrastructure, an API for BCIs. With this starting point we brainstormed other types of direct and indirect relationships people might have with our BCI API to create multiple “entry points” into our API’s world. We iterated on various types of relationships and artifacts: there are end-users, but also clients, software engineers, and app developers, each of whom might interact with an API in different ways, directly or indirectly. Through iterations of different scenarios (a BCI-assisted tax filing service was thought of at one point), and through discussions with our colleagues (some of whom posed questions about what labor in higher education might look like with BCIs), we slowly began to think that looking at the work practices implicated in these different relationships and artifacts would be a fruitful way to focus our designs.

Toward “Platform Fictions”

In part, we think that creating design fictions in mundane technical forms like documentation or Stack Overflow posts might help the artifacts be legible to software engineers and technical researchers. More generally, this leads us to think more about what it might mean to put platforms and infrastructures at the center of design fiction (as well as build on some of the insights from platform studies and infrastructure studies). Adoption and use do not occur in a vacuum. Rather, technologies get adopted into and by existing sociotechnical systems. We can use design fiction to open the “black boxes” of emerging sociotechnical systems. Given that infrastructures are often relegated to the background in everyday use, surfacing and focusing on an infrastructure helps us situate our design fictions in the everyday and mundane, rather than dystopia or utopia.

We find that using a digital infrastructure as a starting point helps surface multiple subject positions in relation to the system at different sites of interaction, beyond those of end-users. From each of these subject positions, we can see where contestation may occur, and how the system looks different. We can also see how assumptions, values, and practices surrounding the system at a particular place and time can be hidden, adapted, or changed by the time the system reaches others. Importantly, we also try to surface ways the system gets used in potentially unintended ways – we don’t think that the academic researchers who developed the API to detect brain signal spikes imagined that it would be used in a system of arguably exploitative crowd labor for content moderation.

Our fictions try to blur clear distinctions that might suggest that what happens in “labs” is separate from “the outside world”, instead highlighting their entanglements. Given that much of BCI research currently exists in research labs, we raise this point to argue that BCI researchers and designers should also be concerned about the implications of adoption and application. This helps give us insight into the responsibilities (and complicity) of researchers and builders of technical systems. Some of the recent controversies around Cambridge Analytica’s use of Facebook’s API point to ways in which the building of platforms and infrastructures isn’t neutral, and suggest that it’s incumbent upon designers, developers, and researchers to raise issues related to social concerns and potential inequalities related to adoption and appropriation by others.

Concluding Thoughts

This work isn’t meant to be predictive. The fictions and analysis present our specific viewpoints by focusing on several types of everyday experiences. One can read many themes into our fictions, and we encourage others to do so. But we find that focusing on potential adoptions of an emerging technology in the everyday and mundane helps surface contours of debates that might occur, which might not be immediately obvious when thinking about BCIs – and might not be immediately obvious if we think about social implications in terms of “worst case scenarios” or dystopias. We hope that this work can raise awareness among BCI researchers and designers about social responsibilities they may have for their technology’s adoption and use. In future work, we plan to use these fictions as research probes to understand how technical researchers envision BCI adoptions and their social responsibilities, building on some of our prior projects. And for design researchers, we show that using a fictional platform in design fiction can help raise important social issues about technology adoption and use from multiple perspectives beyond those of end-users, and help surface issues that might arise from unintended or unexpected adoption and use. Using design fiction to interrogate sociotechnical issues present in the everyday can better help us think about the futures we desire.

Crossposted with Richmond’s blog, The Bytegeist
